VS-Quant: Per-vector Scaled Quantization for Accurate Low-Precision ...

Feb 8, 2021 · Quantization enables efficient acceleration of deep neural networks by reducing model memory footprint and exploiting low-cost integer math hardware units. Quantization …

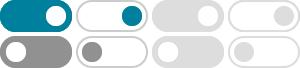

Quantized inference accelerates compute-bound operations, conserves memory bandwidth for memory-bound operations, and reduces on-chip storage size. VS-Quant extensions highlighted …

VS-QUANT: Per-Vector Scaled Quantization for Accurate Low

Quantization enables efficient acceleration of deep neural networks by reducing model memory footprint and exploiting low-cost integer math hardware units. Quantization maps floating-point …

We propose fine-grained per-vector scaled quantization (VS-Quant) to mitigate quantization-related accuracy loss. In contrast to coarse-grained per-layer/per-output-channel scaling, VS …
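
The contrast between per-vector and coarse-grained scaling can be illustrated on a single weight row. A minimal pure-Python sketch, assuming symmetric 4-bit quantization and a hypothetical vector size of 4; the per-vector scales are kept in floating point for simplicity, which may differ from how the paper stores them, and the function names are illustrative rather than taken from the paper.

```python
def quantize_symmetric(values, bits):
    """Quantize a list with one shared scale; return the dequantized values."""
    qmax = 2 ** (bits - 1) - 1  # e.g. 7 for signed 4-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0  # guard against all-zero input
    return [max(-qmax, min(qmax, round(v / scale))) * scale for v in values]


def per_vector_quantize(row, vector_size, bits):
    """Split a row into contiguous vectors, each quantized with its own scale."""
    out = []
    for start in range(0, len(row), vector_size):
        out.extend(quantize_symmetric(row[start:start + vector_size], bits))
    return out


def mse(a, b):
    """Mean squared error between two equal-length lists."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


# Toy row with one large outlier (8.0) among small weights.
row = [0.01, 0.02, -0.015, 0.03, 8.0, 0.05, -0.04, 0.02]
coarse = quantize_symmetric(row, bits=4)                # one scale for the whole row
fine = per_vector_quantize(row, vector_size=4, bits=4)  # one scale per 4 elements

print(f"per-row MSE:    {mse(row, coarse):.6f}")
print(f"per-vector MSE: {mse(row, fine):.6f}")
```

The per-vector MSE comes out lower: the outlier only coarsens the scale of its own vector, so the first four small weights keep a fine quantization grid instead of collapsing to zero.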

Quanto: A PyTorch Quantization Toolkit - Hugging Face

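Quantization represents a deep learning model's weights and activations with low-precision data types such as 8-bit integers (int8), reducing the compute and memory overhead that comes from the traditional practice of representing them in 32-bit floating point (float32). Reducing the bit width …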
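
The float32-to-int8 mapping described above can be sketched with a single symmetric scale per tensor. This is a generic illustration, assuming symmetric quantization to the range [-127, 127]; it does not use Quanto's API, and the helper names are hypothetical.

```python
def quantize_int8(values):
    """Symmetric per-tensor int8 quantization: one scale for the whole tensor."""
    max_abs = max(abs(v) for v in values)
    # Map the largest magnitude to 127; guard against an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float values from int8 codes and the shared scale."""
    return [x * scale for x in q]


weights = [0.5, -1.27, 0.01, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q, scale)
print(restored)
```

With these inputs the largest magnitude, -1.27, maps to -127, and every dequantized value lies within half a scale step of the original.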

SVDQuant - MIT's Post-Training Quantization Technique for Diffusion Models | AI工具集 (AI Tool Set)

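SVDQuant is a post-training quantization technique from an MIT research team, aimed at diffusion models. It quantizes model weights and activations to 4 bits, reducing memory usage and speeding up inference. SVDQuant introduces a high-precision low-rank branch to absorb, during quantization, the anomalous …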
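
The low-rank branch idea can be illustrated on a toy weight matrix with one outlier column. A rough sketch under stated assumptions: it keeps a rank-1 approximation (found by power iteration rather than a full SVD) in full precision and quantizes only the residual to 4 bits with one per-tensor scale. It omits the rest of SVDQuant's pipeline, including activation quantization; the matrix and function names are hypothetical.

```python
import math


def quantize_dequantize(matrix, bits=4):
    """Symmetric per-tensor fake quantization (quantize, then dequantize)."""
    qmax = 2 ** (bits - 1) - 1
    max_abs = max(abs(x) for row in matrix for x in row)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    return [[max(-qmax, min(qmax, round(x / scale))) * scale for x in row] for row in matrix]


def rank1_approx(matrix, iters=50):
    """Rank-1 approximation sigma * u v^T via power iteration (stands in for SVD)."""
    rows, cols = len(matrix), len(matrix[0])
    v = [1.0] * cols
    for _ in range(iters):
        u = [sum(matrix[i][j] * v[j] for j in range(cols)) for i in range(rows)]  # u = W v
        v = [sum(matrix[i][j] * u[i] for i in range(rows)) for j in range(cols)]  # v = W^T u
        norm = math.sqrt(sum(x * x for x in v))
        v = [x / norm for x in v]
    u = [sum(matrix[i][j] * v[j] for j in range(cols)) for i in range(rows)]  # sigma * u_hat
    return [[u[i] * v[j] for j in range(cols)] for i in range(rows)]


def frob_error(a, b):
    """Frobenius norm of a - b."""
    return math.sqrt(sum((x - y) ** 2 for ra, rb in zip(a, b) for x, y in zip(ra, rb)))


# Toy weights: column 0 is an outlier channel, the rest are small.
W = [[10.0, 0.1, -0.2, 0.15],
     [9.5, -0.1, 0.05, 0.2],
     [10.5, 0.2, -0.1, -0.15]]

direct = quantize_dequantize(W)  # baseline: quantize W directly to 4 bits

low_rank = rank1_approx(W)       # high-precision branch
residual = [[w - l for w, l in zip(rw, rl)] for rw, rl in zip(W, low_rank)]
q_residual = quantize_dequantize(residual)
recon = [[l + r for l, r in zip(rl, rr)] for rl, rr in zip(low_rank, q_residual)]

print(f"direct 4-bit error:        {frob_error(W, direct):.4f}")
print(f"low-rank + 4-bit residual: {frob_error(W, recon):.4f}")
```

In the direct case the outlier column sets the scale, so the small weights collapse to zero; once the rank-1 branch absorbs that column, the residual's much smaller range yields a finer 4-bit grid and a lower reconstruction error.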

VS-Quant: Per-vector Scaled Quantization for Accurate Low-Precision Neural Network Inference, arXiv - CS

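Feb 8, 2021 · Quantization can efficiently accelerate deep neural networks by reducing a model's memory footprint and exploiting low-cost integer math hardware units. Quantization uses scale factors to map the floating-point weights and activations of a trained model to low-bitwidth integer values. …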

[2405.16406] SpinQuant: LLM quantization with learned rotations

May 26, 2024 · In this work, we identify a collection of applicable rotation parameterizations that lead to identical outputs in full-precision Transformer architectures while enhancing …
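
The rotation invariance stated above can be checked numerically: for an orthogonal matrix R, (x R)(R^T W) = x W, so rotating activations and counter-rotating weights leaves the full-precision output unchanged. This sketch uses a fixed, normalized 4x4 Hadamard matrix as R instead of SpinQuant's learned rotations, and the toy x and W are made up. It also shows, as an illustrative assumption rather than a claim from the snippet, how a rotation can spread one large activation across channels and shrink the maximum magnitude a quantizer must cover.

```python
import math


def matmul(a, b):
    """Plain-Python matrix product of lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(m):
    return [list(col) for col in zip(*m)]


# Normalized 4x4 Hadamard matrix: orthogonal, so R R^T = I.
H = [[1, 1, 1, 1],
     [1, -1, 1, -1],
     [1, 1, -1, -1],
     [1, -1, -1, 1]]
R = [[h / 2 for h in row] for row in H]

x = [[8.0, 0.1, -0.2, 0.3]]  # activation row with one outlier channel
W = [[0.5, -0.1], [0.2, 0.3], [-0.4, 0.1], [0.1, 0.2]]

x_rot = matmul(x, R)             # rotated activations
W_rot = matmul(transpose(R), W)  # counter-rotated weights
y_ref = matmul(x, W)
y_rot = matmul(x_rot, W_rot)

print("max |x|      :", max(abs(v) for v in x[0]))
print("max |x R|    :", max(abs(v) for v in x_rot[0]))
print("outputs match:", all(math.isclose(a, b, abs_tol=1e-9) for a, b in zip(y_ref[0], y_rot[0])))
```

Here the outlier of 8.0 becomes a maximum of roughly 4.2 after rotation, while the two outputs agree up to floating-point rounding.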

Quantification of DAS VSP Quality: SNR vs. Log-Based Metrics

Jan 28, 2022 · The signal-to-noise ratio (SNR) often drives the choice of distributed acoustic sensing (DAS) parameters in vertical seismic profiling (VSP). We compare this established …